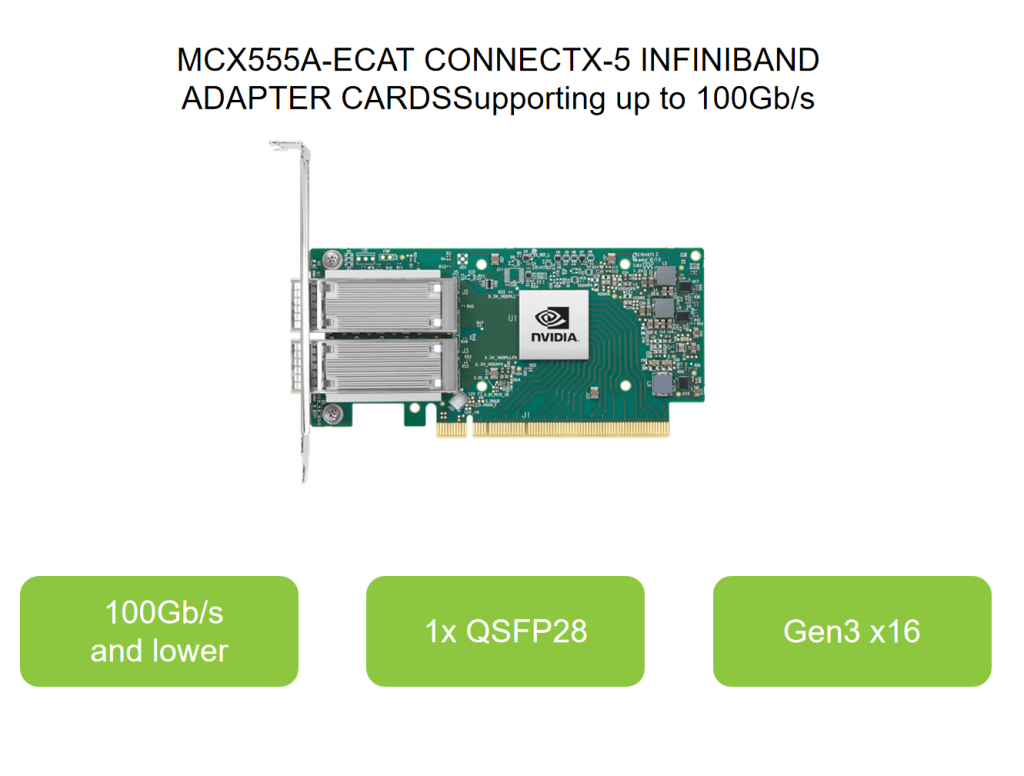

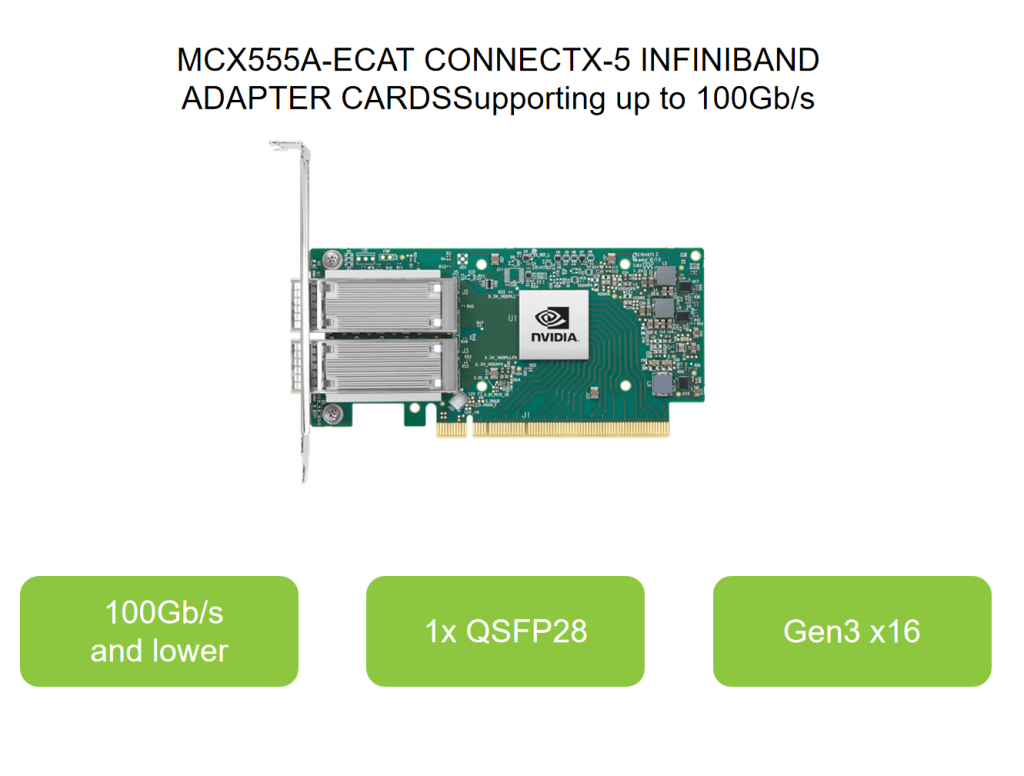

Mellanox network card MCX555A-ECAT ConnectX-5 EDR IB (100Gb/s) and 100GbE Single-Port QSFP28

ConnectX®-5 InfiniBand adapter cards, including the single-port MCX555A-ECAT, provide a high-performance, flexible solution with up to two ports of 100Gb/s InfiniBand and Ethernet connectivity, low latency, and a high message rate, plus an embedded PCIe switch and NVMe over Fabrics offloads.

These intelligent remote direct memory access (RDMA)-enabled adapters provide advanced application offload capabilities for high-performance computing (HPC), cloud hyperscale, and storage platforms. ConnectX-5 adapter cards for PCIe Gen3 and Gen4 servers are available as stand-up PCIe cards and in Open Compute Project (OCP) Spec 2.0 form factors. Selected models also offer NVIDIA Multi-Host™ and NVIDIA Socket Direct technologies.

MCX555A-ECAT Specifications

| Model number | MCX555A-ECAT | MCX556A-ECAT | MCX556A-ECUT | MCX556A-EDAT |
| --- | --- | --- | --- | --- |
| InfiniBand Supported Speeds | 100Gb/s and lower | 100Gb/s and lower | 100Gb/s and lower | 100Gb/s and lower |
| Ethernet Supported Speeds | 100GbE and lower | 100GbE and lower | 100GbE and lower | 100GbE and lower |
| Interface Type | 1x QSFP28 | 2x QSFP28 | 2x QSFP28 | 2x QSFP28 |
| Host Interface | Gen3 x16 | Gen3 x16 | Gen3 x16 | Gen4 x16 |
| Additional Features | - | - | UEFI enabled | ConnectX-5 Ex |

MCX555A-ECAT Key Features

> Tag matching and rendezvous offloads

> Adaptive routing on reliable transport

> Burst buffer offloads for background checkpointing

> Embedded PCIe switch

> PCIe Gen4 support

> RoHS compliant

MCX555A-ECAT BENEFITS

HPC Environments

ConnectX-5 offers enhancements to HPC infrastructures by providing MPI, SHMEM/PGAS, and rendezvous tag matching offload, hardware support for out-of-order RDMA write and read operations, as well as additional network atomic and PCIe atomic operations support.

ConnectX-5 enhances RDMA network capabilities by completing the switch adaptive-routing capabilities and supporting data delivered out-of-order, while maintaining ordered completion semantics, providing multipath reliability, and efficient support for all network topologies, including DragonFly and DragonFly+.

ConnectX-5 also supports burst buffer offload for background checkpointing without interfering with main CPU operations, and the innovative dynamic connected transport (DCT) service to ensure extreme scalability for compute and storage systems.
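The idea of out-of-order delivery with ordered completion semantics can be pictured with a small software model. The sketch below is only a conceptual illustration, not the adapter's actual implementation: the class `OrderedCompletionModel`, its fields, and the message and packet numbering are all hypothetical names chosen for this example. Packets may arrive in any order (for instance, over different adaptive-routing paths), but a message is reported as complete only after every earlier message has completed.

```python
from dataclasses import dataclass, field


@dataclass
class OrderedCompletionModel:
    """Toy model: packets land in any order, completions are reported in order."""

    # Hypothetical bookkeeping: message id -> number of packets expected.
    packets_per_message: dict[int, int]
    received: dict[int, set[int]] = field(default_factory=dict)
    next_to_complete: int = 0  # lowest message id not yet completed
    completions: list[int] = field(default_factory=list)

    def on_packet(self, msg_id: int, seq: int) -> list[int]:
        """Record one arriving packet and return newly completed message ids."""
        self.received.setdefault(msg_id, set()).add(seq)
        newly_done = []
        # Release completions strictly in message order, even if a later
        # message finished arriving before an earlier one.
        while self._is_full(self.next_to_complete):
            newly_done.append(self.next_to_complete)
            self.next_to_complete += 1
        self.completions.extend(newly_done)
        return newly_done

    def _is_full(self, msg_id: int) -> bool:
        expected = self.packets_per_message.get(msg_id)
        if expected is None:
            return False
        return len(self.received.get(msg_id, ())) == expected


model = OrderedCompletionModel(packets_per_message={0: 2, 1: 1, 2: 2})
# Arrival order as (message id, packet sequence number); message 1 lands first.
arrivals = [(1, 0), (2, 1), (0, 1), (0, 0), (2, 0)]
events = [model.on_packet(m, s) for m, s in arrivals]
print(events)             # completions released after each arrival
print(model.completions)  # overall completion order
```

In this model, message 1 is fully received first, yet its completion is held back until message 0 finishes, which is the ordering guarantee the text describes.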

Storage Environments

NVMe storage devices are gaining popularity, offering very fast storage access. The NVMe over Fabrics (NVMe-oF) protocol leverages RDMA connectivity for remote access. ConnectX-5 offers further enhancements by providing NVMe-oF target offloads, enabling highly efficient NVMe storage access with no CPU intervention, and thus improved performance and lower latency.

Standard block and file access protocols can leverage RDMA for high-performance storage access. A consolidated compute and storage network achieves significant cost-performance advantages over multi-fabric networks.

Adapter Card Portfolio

ConnectX-5 InfiniBand adapter cards are available in several form factors, including low-profile stand-up PCIe, Open Compute Project (OCP) Spec 2.0 Type 1, and OCP 2.0 Type 2.

NVIDIA Multi-Host technology allows multiple hosts to be connected into a single adapter by separating the PCIe interface into multiple and independent interfaces.

The portfolio also offers NVIDIA Socket Direct configurations that enable servers without x16 PCIe slots to split the card’s 16-lane PCIe bus into two 8-lane buses on dedicated cards connected by a harness. This provides 100Gb/s port speed even to servers without an x16 PCIe slot.

Socket Direct also enables NVIDIA GPUDirect® RDMA for all CPU/GPU pairs by ensuring that all GPUs are linked to CPUs close to the adapter card, and enables Intel® DDIO on both sockets by creating a direct connection between the sockets and the adapter card.
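A rough calculation shows why the lane count matters for a 100Gb/s port. The sketch below estimates per-direction PCIe link bandwidth from the per-lane signalling rate and the line encoding of each generation (8b/10b for Gen1 and Gen2, 128b/130b for Gen3 and Gen4). It deliberately ignores packet and protocol overhead, so real usable throughput is somewhat lower. The function and variable names are illustrative only.

```python
# Per-lane signalling rate (GT/s) and line-encoding efficiency per PCIe generation.
PCIE_GENERATIONS = {
    1: (2.5, 8 / 10),      # 8b/10b encoding
    2: (5.0, 8 / 10),      # 8b/10b encoding
    3: (8.0, 128 / 130),   # 128b/130b encoding
    4: (16.0, 128 / 130),  # 128b/130b encoding
}

PORT_GBPS = 100.0  # one EDR InfiniBand / 100GbE port


def pcie_gbps(gen: int, lanes: int) -> float:
    """Per-direction link bandwidth in Gb/s, accounting for line encoding only."""
    rate, efficiency = PCIE_GENERATIONS[gen]
    return rate * efficiency * lanes


gen3_x8 = pcie_gbps(3, 8)
gen3_x16 = pcie_gbps(3, 16)
socket_direct = 2 * gen3_x8  # two x8 links, as in a Socket Direct split
gen4_x16 = pcie_gbps(4, 16)

for label, bw in [
    ("Gen3 x8", gen3_x8),
    ("Gen3 x16", gen3_x16),
    ("2 x Gen3 x8", socket_direct),
    ("Gen4 x16", gen4_x16),
]:
    print(f"{label:<12} {bw:6.1f} Gb/s  covers one 100Gb/s port: {bw >= PORT_GBPS}")
```

Under these assumptions a single Gen3 x8 link falls short of 100Gb/s, while two x8 links together match a Gen3 x16 link, which is consistent with the Socket Direct description above.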

PCI Express Interface

> PCIe Gen 4.0, 3.0, 2.0, and 1.1 compatible

> 2.5, 5.0, 8, 16 GT/s link rate

> Auto-negotiates to x16, x8, x4, x2, or x1 lanes

> PCIe atomic

> Transaction Layer Packet (TLP) Processing Hints (TPH)

> PCIe switch Downstream Port Containment (DPC)

> Access Control Service (ACS) for peer-to-peer secure communication

> Advanced Error Reporting (AER)

> Process Address Space ID (PASID)

> Address Translation Services (ATS)

> IBM CAPI v2 support (Coherent Accelerator Processor Interface)

> Support for MSI/MSI-X mechanisms

Operating Systems/Distributions(1)

> RHEL/CentOS

> Windows

> FreeBSD

> VMware

> OpenFabrics Enterprise Distribution (OFED)

> OpenFabrics Windows Distribution (WinOF-2)

Connectivity

> Interoperability with InfiniBand switches (up to 100Gb/s)

> Interoperability with Ethernet switches (up to 100GbE)

> Passive copper cable with ESD protection

> Powered connectors for optical and active cable support