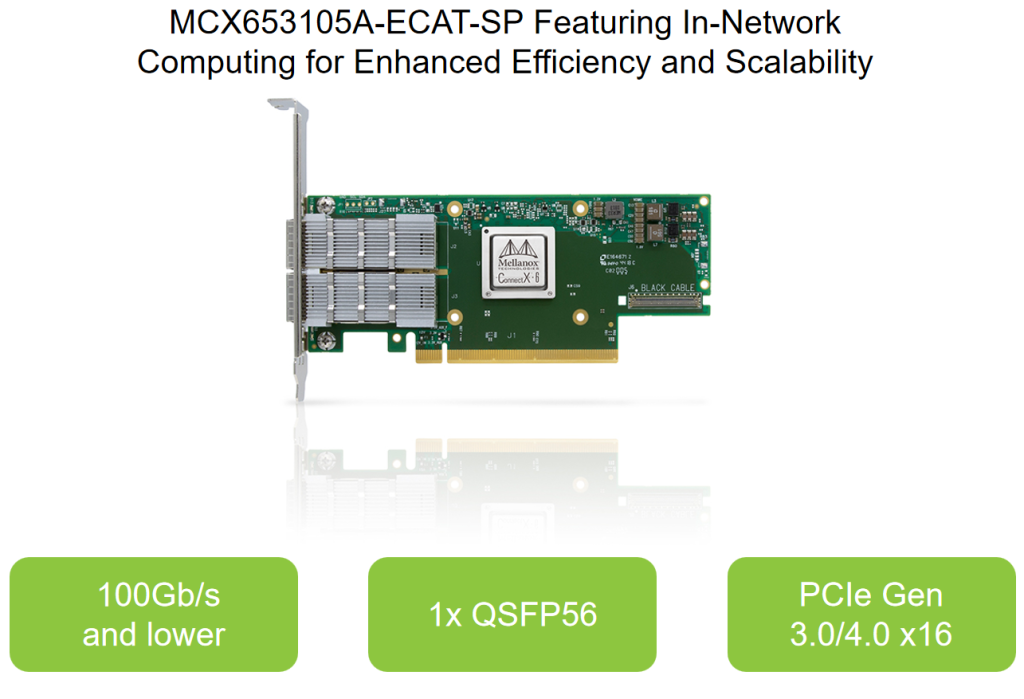

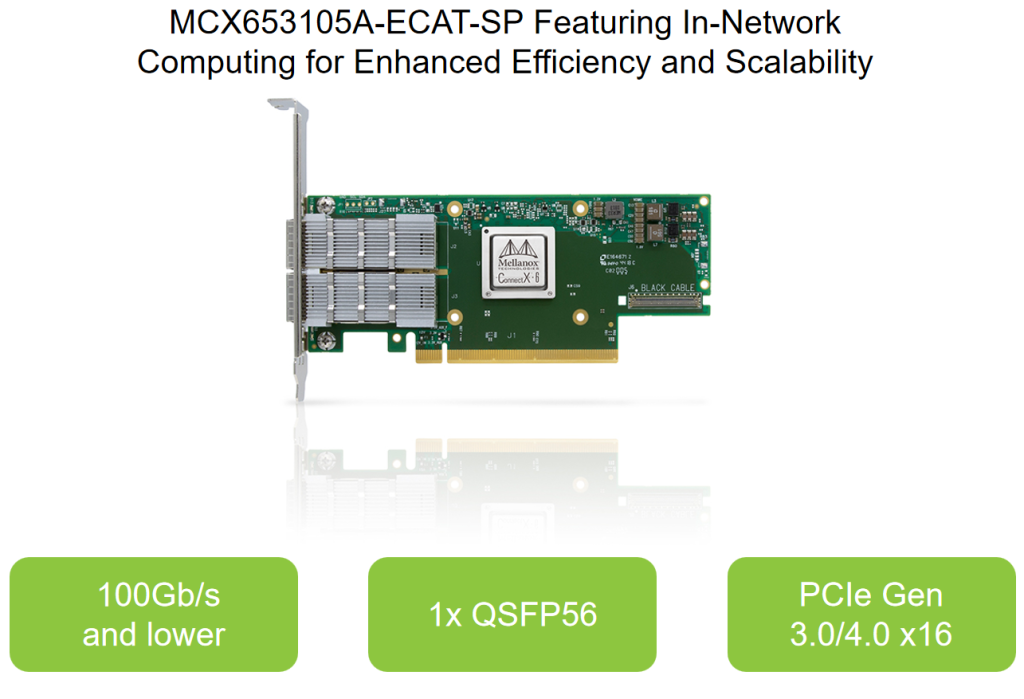

Mellanox network card MCX653105A-ECAT/MCX653105A-ECAT-SP ConnectX-6 VPI Adapter Card HDR100/EDR/100GbE

Complex workloads demand ultra-fast processing of high-resolution simulations, extreme-size datasets, and highly parallelized algorithms. As these computing requirements continue to grow, NVIDIA Quantum InfiniBand, the world’s only fully offloadable In-Network Computing acceleration technology, provides the dramatic leap in performance needed to achieve unmatched results in high-performance computing (HPC), AI, and hyperscale cloud infrastructures, with less cost and complexity.

MCX653105A-ECAT-SP ConnectX®-6 InfiniBand smart adapter cards are a key element in the NVIDIA Quantum InfiniBand platform. ConnectX-6 provides up to two ports of 200Gb/s InfiniBand and Ethernet connectivity with extremely low latency, high message rate, smart offloads, and NVIDIA In-Network Computing acceleration that improve performance and scalability.

MCX653105A-ECAT-SP Specifications

| Model number | MCX653105A-ECAT | MCX651105A-EDAT | MCX653106A-ECAT | MCX653105A-HDAT | MCX653106A-HDAT |
| --- | --- | --- | --- | --- | --- |
| InfiniBand supported speed (Gb/s) | 100Gb/s and lower | 100Gb/s and lower | 100Gb/s and lower | 200Gb/s and lower | 200Gb/s and lower |
| Ethernet supported speed (Gb/s) | 100Gb/s and lower | 100Gb/s and lower | 100Gb/s and lower | 200Gb/s and lower | 200Gb/s and lower |
| Network ports and cages | 1x QSFP56 | 1x QSFP56 | 2x QSFP56 | 1x QSFP56 | 2x QSFP56 |
| Host interface (PCIe) | PCIe Gen 3.0/4.0 x16 | PCIe Gen 4.0 x8 | PCIe Gen 3.0/4.0 x16 | PCIe Gen 3.0/4.0 x16 | PCIe Gen 3.0/4.0 x16 |
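
The host interface entries in the specifications above matter because the PCIe slot, not the network port, can become the bottleneck. The following is a rough sketch of that arithmetic, not vendor-published figures: it assumes the standard PCIe signaling rates (8 GT/s per lane for Gen 3.0, 16 GT/s for Gen 4.0) with 128b/130b encoding, and it ignores packet-level protocol overhead, so real throughput will be somewhat lower. The names `pcie_gbps` and `can_sustain` are illustrative helpers, not part of any NVIDIA tool.

```python
# Approximate one-direction PCIe bandwidth, before TLP/DLLP protocol overhead.
# Assumption: Gen 3.0 and Gen 4.0 both use 128b/130b line encoding.
GT_PER_LANE = {"3.0": 8.0, "4.0": 16.0}  # gigatransfers per second per lane
ENCODING_EFFICIENCY = 128 / 130


def pcie_gbps(gen: str, lanes: int) -> float:
    """Return the approximate one-direction PCIe bandwidth in Gb/s."""
    return GT_PER_LANE[gen] * lanes * ENCODING_EFFICIENCY


def can_sustain(gen: str, lanes: int, port_gbps: float, ports: int = 1) -> bool:
    """True if the slot bandwidth covers the combined line rate of all ports."""
    return pcie_gbps(gen, lanes) >= port_gbps * ports


for gen, lanes in [("3.0", 16), ("4.0", 8), ("4.0", 16)]:
    print(f"PCIe Gen {gen} x{lanes}: {pcie_gbps(gen, lanes):.1f} Gb/s")
# PCIe Gen 3.0 x16: 126.0 Gb/s
# PCIe Gen 4.0 x8: 126.0 Gb/s
# PCIe Gen 4.0 x16: 252.1 Gb/s
```

Under these assumptions, a single 100Gb/s port fits within Gen 3.0 x16 or Gen 4.0 x8, while a 200Gb/s port needs a Gen 4.0 x16 slot to run at line rate; `can_sustain("3.0", 16, 200)` returns `False`.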

MCX653105A-ECAT-SP Key Features

> Up to 200Gb/s connectivity per port

> Max bandwidth of 200Gb/s

> Up to 215 million messages/sec

> Extremely low latency

> Block-level XTS-AES mode hardware encryption

> Federal Information Processing Standards (FIPS) compliant

> Supports both 50G SerDes (PAM4)- and 25G SerDes (NRZ)-based ports

> Best-in-class packet pacing with subnanosecond accuracy

> PCIe Gen 3.0 and Gen 4.0 support

> In-Network Compute acceleration engines

> RoHS compliant

> Open Data Center Committee (ODCC) compatible
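
The 50G PAM4 and 25G NRZ SerDes options in the list above determine which link speeds a QSFP56 port can negotiate. The sketch below shows how nominal link rates follow from lane count and per-lane rate. The lane configurations are the commonly cited ones for InfiniBand HDR, HDR100, and EDR and are stated here as assumptions, not taken from this datasheet; `LINK_MODES` and `link_rate` are illustrative names.

```python
# Assumed lane configurations: (lane count, nominal Gb/s per lane, modulation).
LINK_MODES = {
    "HDR": (4, 50, "PAM4"),     # 200Gb/s InfiniBand
    "HDR100": (2, 50, "PAM4"),  # 100Gb/s InfiniBand over two lanes
    "EDR": (4, 25, "NRZ"),      # 100Gb/s InfiniBand
}


def link_rate(mode: str) -> int:
    """Return the nominal link rate in Gb/s for a named link mode."""
    lanes, lane_gbps, _modulation = LINK_MODES[mode]
    return lanes * lane_gbps


for mode, (lanes, lane_gbps, modulation) in LINK_MODES.items():
    print(f"{mode}: {lanes} x {lane_gbps}G {modulation} = {link_rate(mode)} Gb/s")
```

This is why a card listed as "100Gb/s and lower" can still reach 100Gb/s either as EDR over four NRZ lanes or as HDR100 over two PAM4 lanes.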

MCX653105A-ECAT-SP Key Applications

> Industry-leading throughput, low CPU utilization, and high message rate

> High performance and intelligent fabric for compute and storage infrastructures

> Cutting-edge performance in virtualized networks, including network function virtualization (NFV)

> Host chaining technology for economical rack design

> Smart interconnect for x86, Power, Arm, GPU, and FPGA-based compute and storage platforms

> Flexible programmable pipeline for new network flows

> Efficient service chaining enablement

> Increased I/O consolidation, reducing data center costs and complexity

High Performance Computing Environments

With its NVIDIA In-Network Computing and In-Network Memory capabilities, ConnectX-6 offloads computation even further to the network, saving CPU cycles and increasing network efficiency. ConnectX-6 utilizes remote direct memory access (RDMA) technology as defined in the InfiniBand Trade Association (IBTA) specification, delivering low latency and high performance. ConnectX-6 enhances RDMA network capabilities even further by delivering end-to-end packet-level flow control.

Machine Learning and Big Data Environments

Data analytics has become an essential function within many enterprise data centers, clouds, and hyperscale platforms. Machine learning (ML) relies on especially high throughput and low latency to train deep neural networks and improve recognition and classification accuracy. With its 200Gb/s throughput, ConnectX-6 is an excellent solution to provide ML applications with the levels of performance and scalability that they require.

Security Including Block-Level Encryption

ConnectX-6 block-level encryption offers a critical innovation to network security. As data in transit is stored or retrieved, it undergoes encryption and decryption. ConnectX-6 hardware offloads the IEEE AES-XTS encryption/decryption from the CPU, saving latency and CPU utilization. It also guarantees protection for users sharing the same resources through the use of dedicated encryption keys. By performing block storage encryption in the adapter, ConnectX-6 eliminates the need for self-encrypted disks. This gives customers the freedom to choose their preferred storage device, including byte addressable and NVDIMM devices that traditionally do not provide encryption. Moreover, ConnectX-6 can offer Federal Information Processing Standards (FIPS) compliance.

Bring NVMe-oF to Storage Environments

NVMe storage devices are gaining momentum, offering very fast access to storage media. The evolving NVMe over Fabrics (NVMe-oF) protocol leverages RDMA connectivity to remotely access NVMe storage devices efficiently, while keeping the end-to-end NVMe model at lowest latency. With its NVMe-oF target and initiator offloads, ConnectX-6 brings further optimization to NVMe-oF, improving CPU utilization and scalability.

Portfolio of Smart Adapters

ConnectX-6 is available in two form factors: low-profile stand-up PCIe and Open Compute Project (OCP) Spec 3.0 cards with QSFP connectors. Single-port, HDR, stand-up PCIe adapters are available based on either ConnectX-6 or ConnectX-6 DE (ConnectX-6 Dx enhanced for HPC applications). In addition, specific PCIe stand-up cards are available with a cold plate for insertion into liquid-cooled Intel Server System D50TNP platforms.